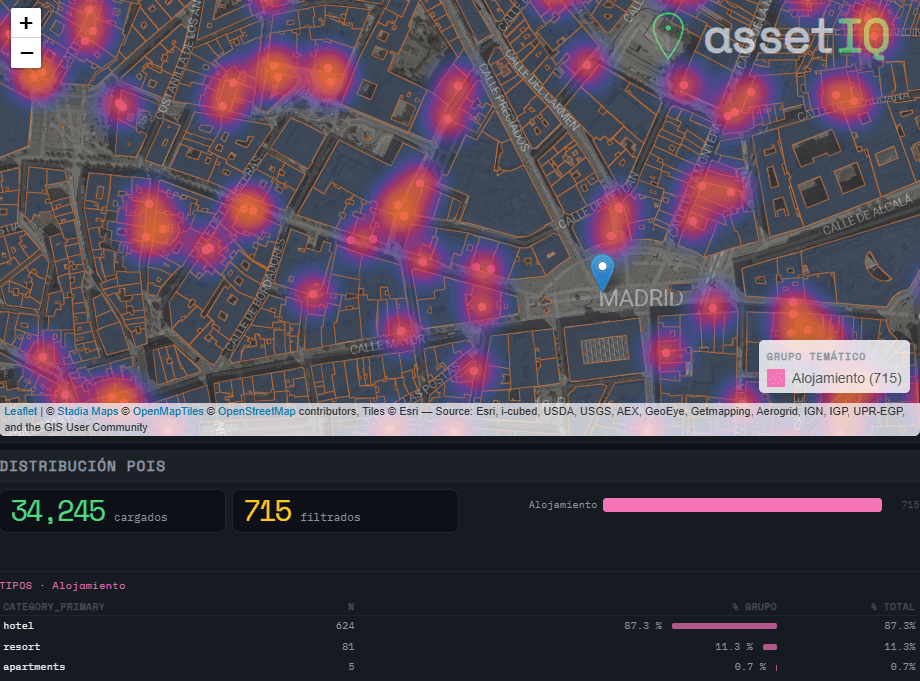

When analysing urban assets, there is genuine value in moving beyond generic neighborhood scores. The density of a coffee shop cluster, the proximity to a financial hub, or the concentration of accommodation around a transport node are signals that traditional datasets either flatten into averages or ignore entirely. assetIQ was built to change that.

assetIQ is an R application powered by DuckDB and Overture Maps that extracts, classifies, and scores Points of Interest (POIs) for any location on Earth. You define a city and a search radius, from 100 meters to 25 kilometers, and the tool queries the Overture Maps Places dataset in real time, classifying each POI into thematic groups: Food & Drink, Retail, Health, Education, Transport, Accommodation, Financial Services, Leisure & Culture, Sport, and more.
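To make the radius-and-classify step concrete, here is a minimal sketch in plain Python. It is not assetIQ's code (the tool itself runs in R on DuckDB); it only illustrates the idea of keeping POIs within a search radius and grouping them by theme. The function names, the `THEME_BY_CATEGORY` mapping, the category labels, the `"Other"` fallback, and the record layout are all assumptions made for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters

# Hypothetical mapping from raw category labels to thematic groups.
# The real tool's mapping is not described in the article.
THEME_BY_CATEGORY = {
    "coffee_shop": "Food & Drink",
    "restaurant": "Food & Drink",
    "bank": "Financial Services",
    "hotel": "Accommodation",
    "pharmacy": "Health",
}


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def classify_pois(pois, center, radius_m):
    """Group the names of POIs within radius_m of center by thematic group.

    pois: list of dicts with "name", "category", "lat", "lon" (assumed layout).
    center: (lat, lon) tuple.
    """
    # The article states a supported range of 100 m to 25 km.
    if not 100 <= radius_m <= 25_000:
        raise ValueError("radius_m must be between 100 and 25000 meters")
    lat0, lon0 = center
    grouped = {}
    for poi in pois:
        if haversine_m(lat0, lon0, poi["lat"], poi["lon"]) <= radius_m:
            # Unmapped categories fall into a hypothetical "Other" group.
            theme = THEME_BY_CATEGORY.get(poi["category"], "Other")
            grouped.setdefault(theme, []).append(poi["name"])
    return grouped
```

Calling `classify_pois(pois, (lat, lon), 500)` returns a dictionary keyed by thematic group. In practice the spatial filter would more likely be pushed down into the DuckDB query rather than done row by row in application code.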

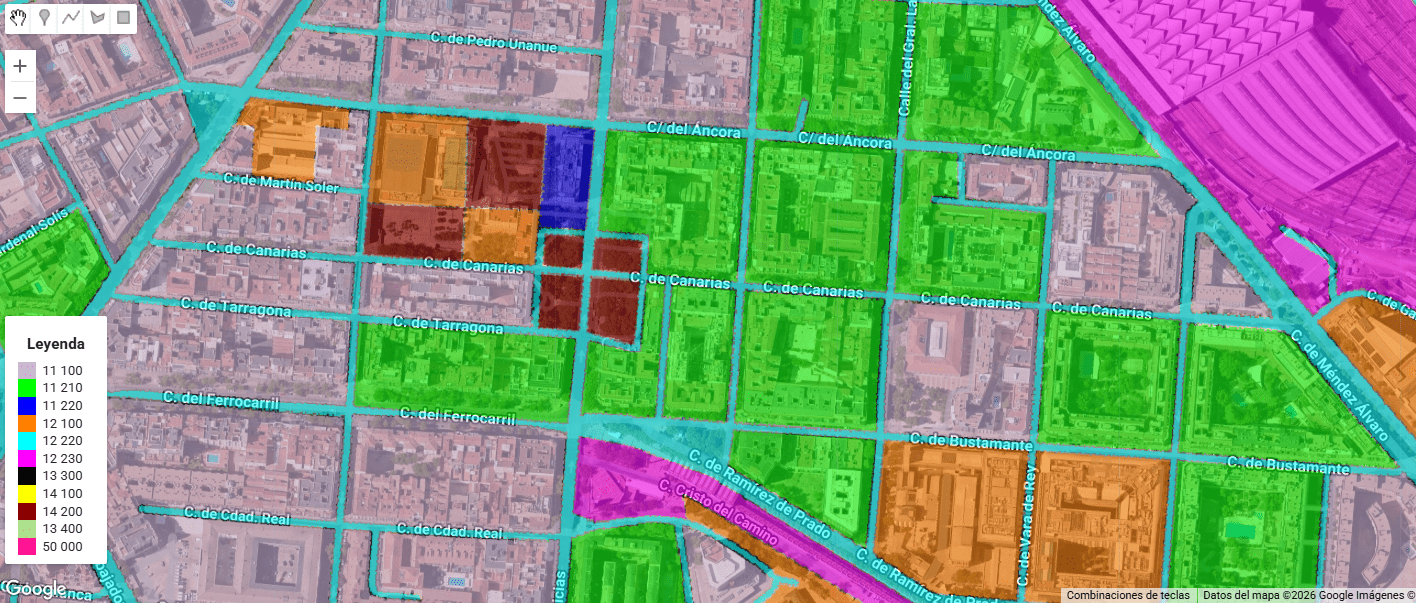

The core output is an attribute called POIQ: a normalized 0–1 score assigned to every building footprint within the area of interest, derived from a Kernel Density Estimation of the selected thematic group. A building in a dense retail corridor scores close to 1. An isolated residential block far from any commerce scores close to 0. This transforms thousands of individual points, which in raw form tell you very little, into a single, interpretable attribute per building, ready for downstream modelling, valuation, or site selection.
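The scoring idea can be sketched in a few lines of plain Python. This is an illustration only, not assetIQ's implementation: it assumes a Gaussian kernel, a fixed bandwidth of 200 meters, building centroids standing in for footprints, coordinates already projected to meters, and min-max rescaling to reach the 0–1 range. The article does not specify any of these choices, and the name `kde_scores` is hypothetical.

```python
import math


def kde_scores(buildings, pois, bandwidth_m=200.0):
    """Return a 0-1 score per building from a Gaussian kernel density of POIs.

    buildings, pois: lists of (x, y) coordinates in projected meters (assumed).
    bandwidth_m: kernel bandwidth; 200 m is an arbitrary illustrative default.
    """
    if not buildings:
        return []
    raw = []
    for bx, by in buildings:
        density = 0.0
        for px, py in pois:
            d2 = (bx - px) ** 2 + (by - py) ** 2
            # Unnormalized Gaussian kernel; the constant factor cancels
            # out in the min-max rescaling below.
            density += math.exp(-d2 / (2 * bandwidth_m ** 2))
        raw.append(density)
    lo, hi = min(raw), max(raw)
    if hi == lo:
        # All buildings see the same density (or there are no POIs).
        return [0.0] * len(raw)
    # Min-max rescaling is an assumption about how the score is normalized.
    return [(r - lo) / (hi - lo) for r in raw]
```

With three buildings at `(0, 0)`, `(100, 0)`, and `(5000, 0)` and a small POI cluster near the origin, the first building receives the highest score and the distant one the lowest, mirroring the retail-corridor versus isolated-block contrast described above. The bandwidth controls how quickly a POI's influence decays with distance, so it strongly shapes the resulting scores.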