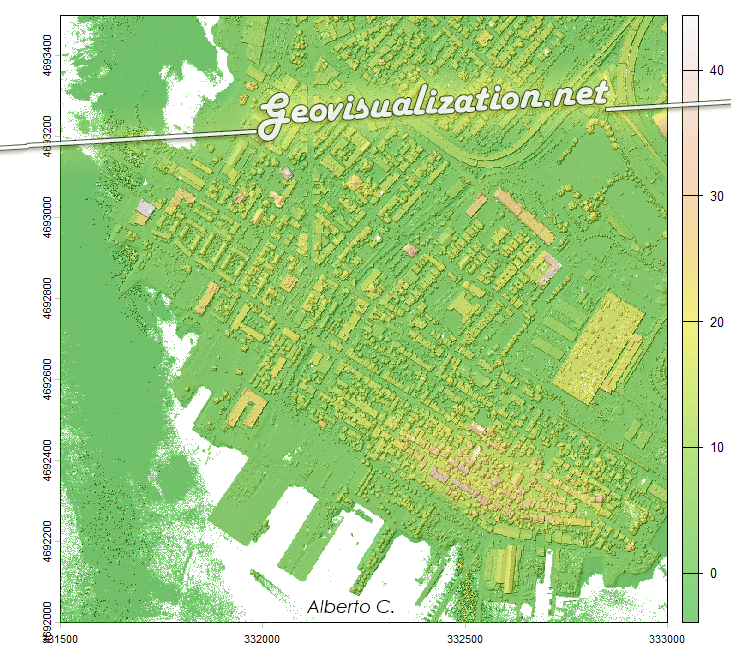

¿Alguna vez has querido visualizar el relieve de un territorio en 3D directamente desde R, sin depender de software GIS externo? Mapterhorn es un proyecto open source que distribuye modelos digitales de elevación (MDT) de alta resolución — hasta 2 metros en España — empaquetados en formato PMTiles, un estándar moderno que permite servir datos geoespaciales sin necesidad de un servidor propio.

En este post veremos cómo configurar Mapterhorn en R usando el paquete mapgl en Rstudio, que nos permite crear mapas interactivos con terreno 3D en pocas líneas de código. El resultado: visualizaciones como la que ves abajo, con sombreado de relieve (hillshade) generado directamente desde los datos de elevación del IGN.